AgenticLab: A Real-World Robot Agent Platform that Can See, Think, and Act

We introduce a robot agent pipeline for open-world, open-vocabulary manipulation with closed-loop vision-language reasoning.

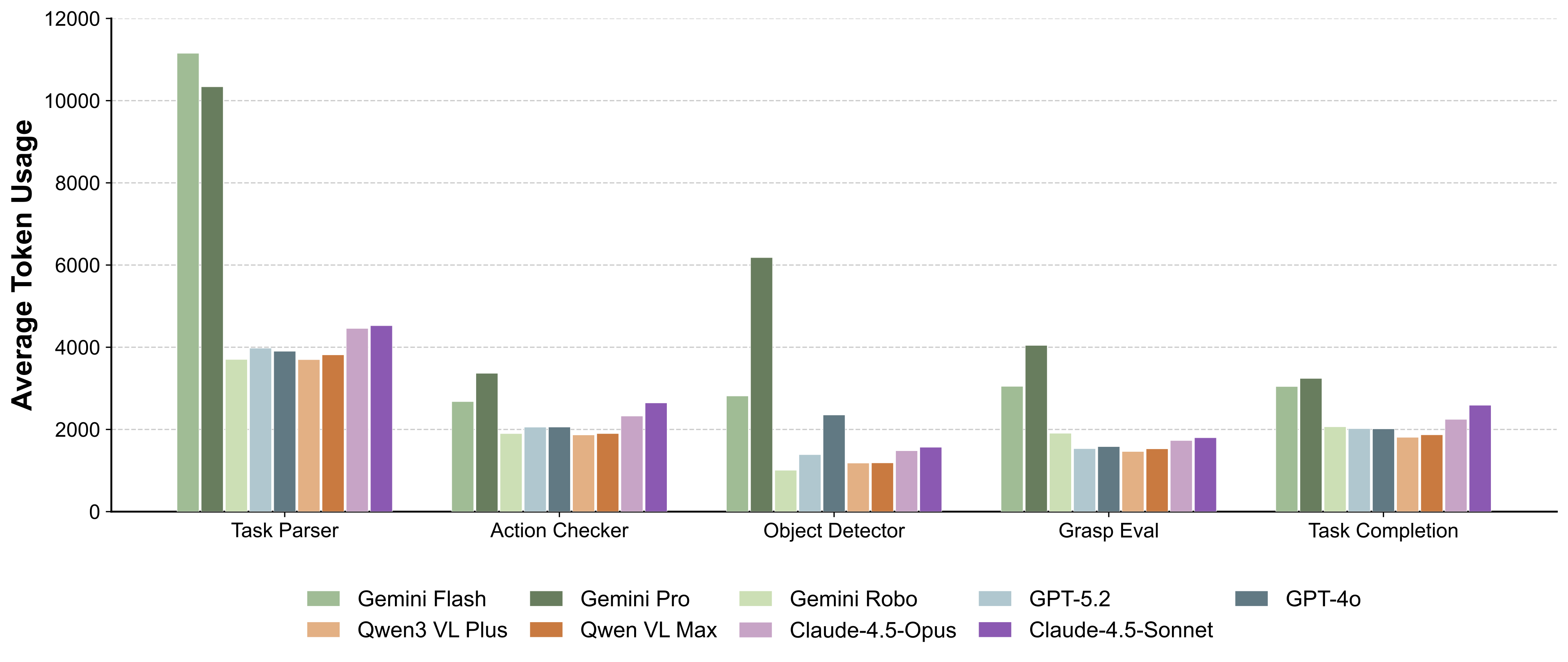

Different VLMs (e.g., Gemini, GPT, Qwen) can be seamlessly swapped through a unified interface, enabling controlled evaluation without model-specific engineering.
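A minimal sketch of what such a unified interface could look like, assuming a single text-plus-images `query()` call is enough for every pipeline module. The names `VLMBackend`, `StubBackend`, `REGISTRY`, `make_vlm`, and `describe_scene` are hypothetical, and the stub returns canned text instead of calling any provider SDK:

```python
from typing import Callable, Protocol


class VLMBackend(Protocol):
    """Hypothetical interface: every VLM adapter exposes the same query() call."""

    name: str

    def query(self, prompt: str, images: list[bytes]) -> str: ...


class StubBackend:
    """Placeholder adapter; a real one would wrap a provider SDK (Gemini, GPT, Qwen)."""

    def __init__(self, name: str) -> None:
        self.name = name

    def query(self, prompt: str, images: list[bytes]) -> str:
        # Deterministic fake response so the sketch runs offline.
        return f"{self.name}: {len(images)} image(s), prompt={prompt!r}"


# Registry mapping a config key to a factory that builds the adapter.
REGISTRY: dict[str, Callable[[], VLMBackend]] = {
    "gemini": lambda: StubBackend("gemini"),
    "gpt": lambda: StubBackend("gpt"),
    "qwen": lambda: StubBackend("qwen"),
}


def make_vlm(key: str) -> VLMBackend:
    """Look up a backend by name; pipeline code never touches model-specific APIs."""
    try:
        return REGISTRY[key]()
    except KeyError:
        raise ValueError(f"unknown VLM backend: {key}") from None


def describe_scene(vlm: VLMBackend, images: list[bytes]) -> str:
    # Pipeline modules depend only on the VLMBackend protocol.
    return vlm.query("List the objects on the table.", images)
```

Because pipeline code depends only on the protocol, swapping models in this sketch reduces to changing the registry key.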

Our benchmark measures grounded perception, spatial reasoning, and long-horizon decision-making under closed-loop execution on physical robots.

We release a reproducible hardware–software stack that integrates sensing, control, and agentic reasoning, enabling direct deployment of VLM-based agents in the wild.

We design a suite of five real-world robot manipulation tasks to evaluate embodied vision-language reasoning under challenging conditions.

The open-vocabulary prompts used for each task are displayed below.

The agent categorizes objects into designated bins based on semantic attributes, demonstrating open-vocabulary grounding and compositional reasoning.

In-the-wild Kitchen

Move the food to the bowl.

Lab Scene

Sort the toys to the blue bin.

In-the-wild Kitchen

Sort the toys to the blue bin.

In-the-wild Outdoor

Sort the toys to the blue bin.

The agent stacks objects vertically in a specified order, which requires precise placement and sequential planning with strong step-to-step dependencies.

Lab Scene

Stack the cubes on the pink plate from bottom to top: orange, yellow, green and blue.

In-the-wild Kitchen

Stack the cubes on the pink plate from bottom to top: orange, blue, yellow, and green.

In-the-wild Kitchen

Stack the cubes on the orange plate from bottom to top: orange, blue, yellow, and green.

In-the-wild Outdoor

Stack the cubes on the blue plate from bottom to top: orange, blue, green, and yellow.

The agent arranges letter blocks to form intersecting words on a grid, combining world knowledge with fine-grained spatial placement.

Scenes: In-the-wild Kitchen, Lab Scene, In-the-wild Kitchen, and In-the-wild Kitchen (shared prompt below).

Fill the numbered slots using the provided blocks to solve the crossword puzzle. You do not need to use all blocks or all slots.

The agent adjusts object poses to satisfy language-specified orientation constraints, which emphasizes spatial understanding beyond 2D placement.

Lab Scene

Pick up the bottles and place them on the plates.

Lab Scene

Pick up the bottles and place them on the plates.

In-the-wild Kitchen

Pick up the bottle and place it on the plate.

In-the-wild Outdoor

Pick up the bottle and place it on the plate.

This long-horizon rearrangement task requires the agent to place items into context-appropriate containers, which demands sustained grounding and error recovery.

In-the-wild Lobby

Open the pot, put the potato into the bowl, then take out the cup in the top drawer, place it on the plate, and close the drawer.

In-the-wild Lobby

Close the pot, put the spice bottle into the top drawer, and close the drawer.

In-the-wild Outdoor

Put the potato into the pot, close the pot, then put the salt bottle in the top drawer and close the drawer.

In-the-wild Outdoor

Close the pot, put the salt bottle into the top drawer, and close the drawer.

AgenticLab executes a closed-loop agentic reasoning pipeline for manipulation that integrates task parsing, grounding, planning, execution, verification, and replanning. The system operates through iterative perception and action cycles, enabling robust performance in unstructured environments.
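The loop described above can be sketched as follows. This is an illustrative structure only, not AgenticLab's implementation: the callables `parse`, `ground`, `plan`, `execute`, and `verify` are hypothetical placeholders for the VLM- and perception-backed modules, and `max_retries` is an assumed bound on replanning attempts per subgoal.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class EpisodeLog:
    """Trace of verification outcomes, one entry per attempt."""
    events: list[str] = field(default_factory=list)


def run_closed_loop(
    task: str,
    parse: Callable[[str], list[str]],      # instruction -> ordered subgoals
    ground: Callable[[str], dict],          # subgoal -> grounded scene info
    plan: Callable[[str, dict], str],       # subgoal + scene -> primitive
    execute: Callable[[str], None],         # run the primitive on the robot
    verify: Callable[[str], bool],          # did the subgoal succeed?
    max_retries: int = 2,
) -> tuple[bool, EpisodeLog]:
    """Hypothetical parse -> ground -> plan -> execute -> verify -> replan loop."""
    log = EpisodeLog()
    for subgoal in parse(task):
        for _ in range(max_retries + 1):
            scene = ground(subgoal)        # re-perceive on every attempt
            action = plan(subgoal, scene)  # (re)plan from the fresh observation
            execute(action)
            if verify(subgoal):
                log.events.append(f"ok:{subgoal}")
                break
            log.events.append(f"check_failed:{subgoal}")
        else:
            # Retries exhausted without a successful check: abort the episode.
            log.events.append(f"abort:{subgoal}")
            return False, log
    return True, log
```

Re-grounding inside the retry loop is the key design choice in this sketch: each replan starts from a fresh observation rather than the stale one that led to the failure.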

The pipeline uses multi-view RGB-D observations for open-vocabulary grounding, VLM-based reasoning for task decomposition and verification, and primitive-based execution with closed-loop feedback. This modular design allows different VLMs to be swapped in through a unified interface, enabling fair evaluation without model-specific engineering.
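Open-vocabulary grounding typically yields a 2D pixel location per camera, and lifting it to a 3D target needs depth and calibration. The sketch below uses the standard pinhole back-projection and a rigid camera-to-world transform, then averages the per-view estimates. The intrinsics (`fx`, `fy`, `cx`, `cy`), extrinsics (`R`, `t`), and the plain averaging step are assumptions for illustration; the page does not describe how AgenticLab fuses views.

```python
from statistics import fmean

Vec3 = tuple[float, float, float]


def backproject(u: float, v: float, depth: float,
                fx: float, fy: float, cx: float, cy: float) -> Vec3:
    """Pinhole back-projection: pixel (u, v) plus metric depth -> camera-frame point."""
    return ((u - cx) * depth / fx, (v - cy) * depth / fy, depth)


def cam_to_world(p: Vec3, R: list[list[float]], t: Vec3) -> Vec3:
    """Rigid transform p_world = R @ p_cam + t (camera extrinsics assumed known)."""
    return tuple(sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))


def fuse(points: list[Vec3]) -> Vec3:
    """Naive multi-view fusion: average the per-view estimates of the same object."""
    return tuple(fmean(p[i] for p in points) for i in range(3))
```

A real system would more likely weight views by depth confidence or reject outliers; plain averaging keeps the sketch short.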

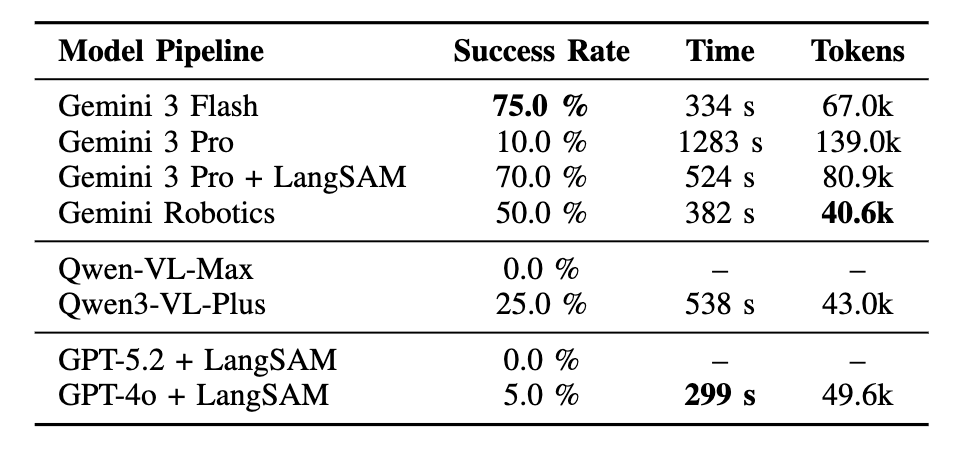

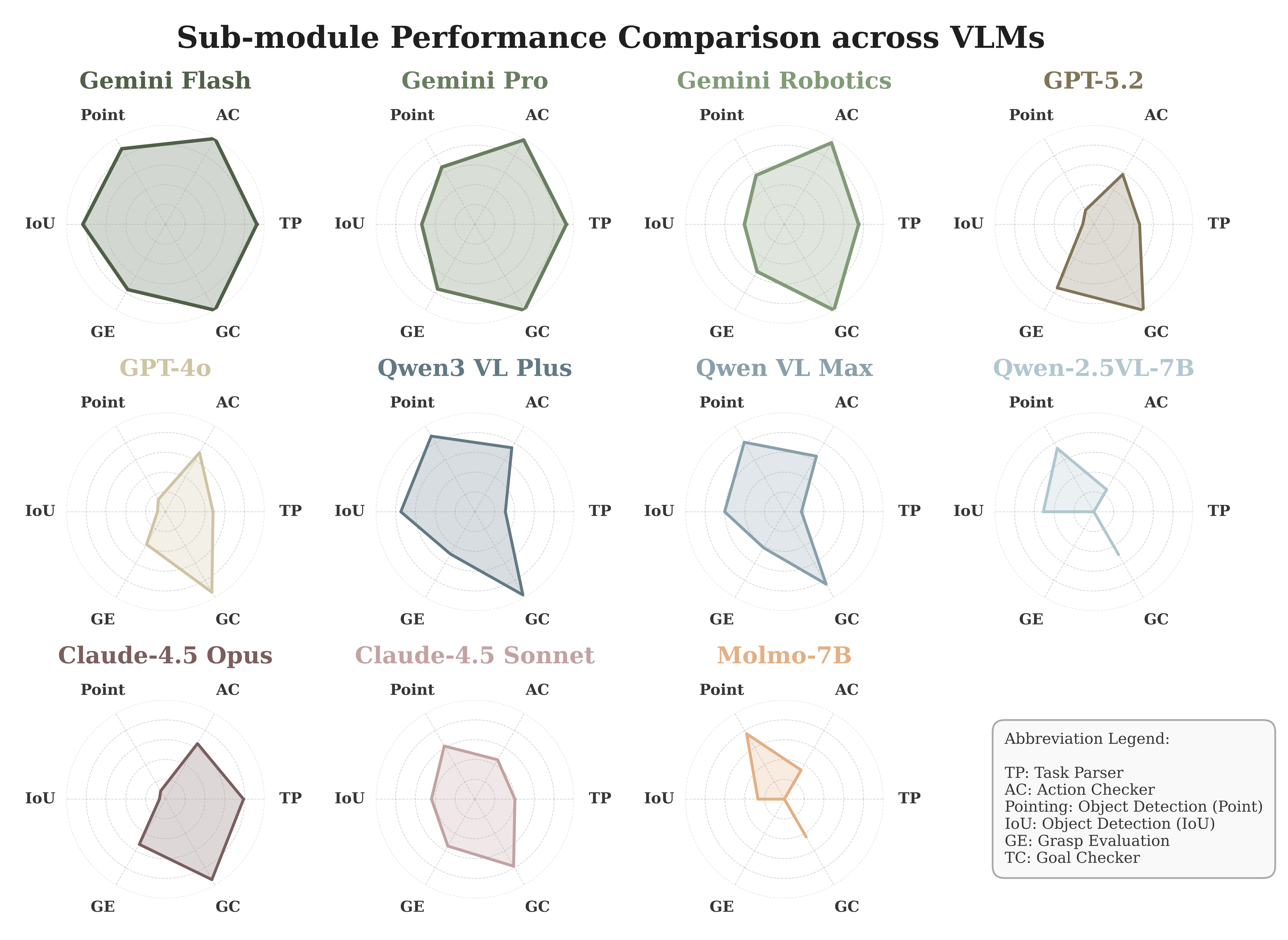

We compare single-VLM baselines under the same agent pipeline to isolate model effects.

Failure mode breakdown for single-VLM pipelines on the sorting task.

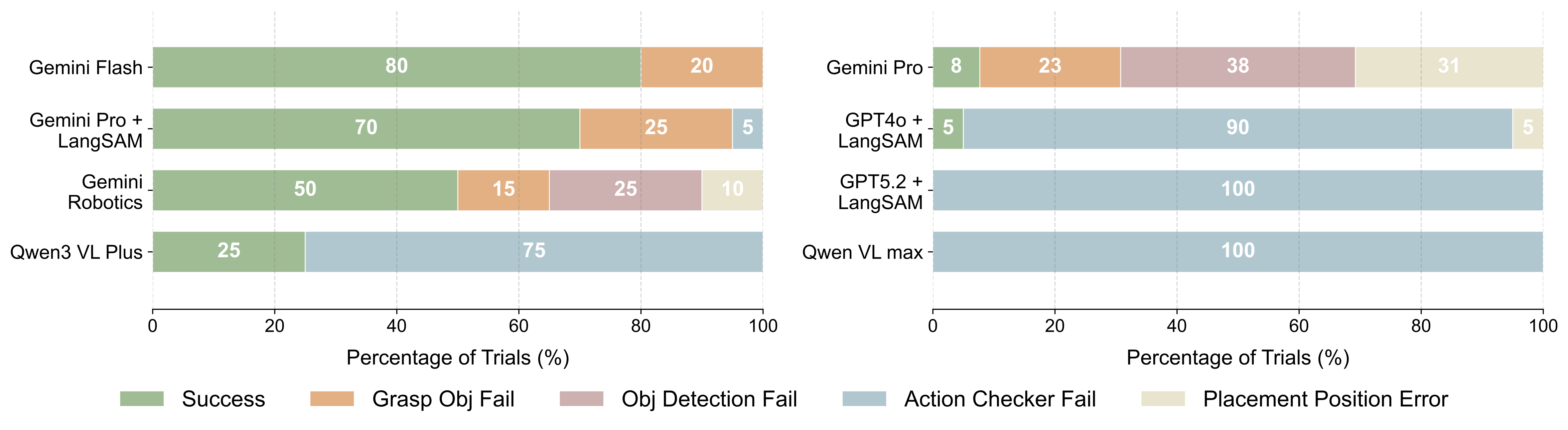

Normalized per-module performance across the pipeline (higher is better). Each axis corresponds to a module score.
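The page does not state how module scores are normalized. One common choice, assumed here, is per-module min-max normalization across the compared models so that every radar axis spans [0, 1]; the function name and module keys are hypothetical:

```python
def normalize_per_module(
    scores: dict[str, dict[str, float]],
) -> dict[str, dict[str, float]]:
    """Min-max normalize raw module scores across models (higher is better).

    scores[model][module] holds a raw score; every model must report the
    same set of modules.
    """
    models = list(scores)
    modules = list(scores[models[0]])
    out: dict[str, dict[str, float]] = {m: {} for m in models}
    for mod in modules:
        vals = [scores[m][mod] for m in models]
        lo, hi = min(vals), max(vals)
        for m in models:
            # If all models tie on a module, give everyone 1.0 instead of dividing by zero.
            out[m][mod] = 1.0 if hi == lo else (scores[m][mod] - lo) / (hi - lo)
    return out
```

Under this assumption the best model on a module sits at 1.0 and the worst at 0.0, so the chart would show relative rather than absolute performance.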

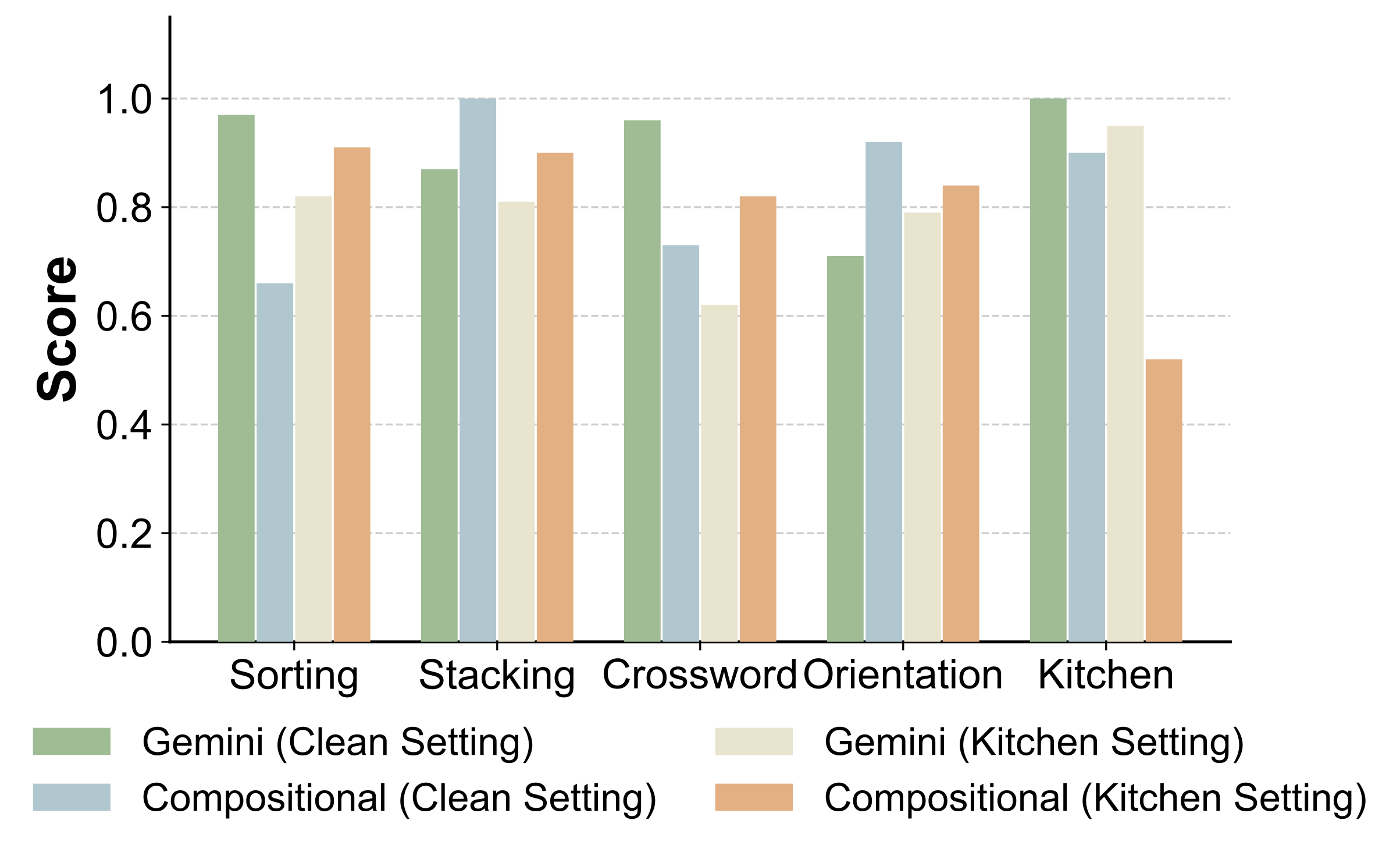

We compare a Gemini Flash single-VLM baseline with our compositional pipeline across all five manipulation tasks. Performance is measured with a task progress score that credits partial completion.
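The exact definition of the progress score is not given on this page. As a hedged illustration, here are two plausible variants: for stacking, credit only the correctly ordered prefix from the bottom (a cube on a wrong base cannot count); for tasks without ordering constraints, use the fraction of verified subgoals. Both functions are hypothetical.

```python
def stacking_progress(target: list[str], observed: list[str]) -> float:
    """Fraction of the target stack (bottom to top) built correctly from the bottom.

    A cube only counts if every cube below it is also correct, reflecting the
    sequential dependencies of stacking.
    """
    correct = 0
    for want, got in zip(target, observed):
        if want != got:
            break
        correct += 1
    return correct / len(target)


def subgoal_progress(completed: list[bool]) -> float:
    """Fraction of parsed subgoals verified as completed (order-independent tasks)."""
    return sum(completed) / len(completed) if completed else 0.0
```

For the lab stacking prompt (orange, yellow, green, blue), a stack of orange, yellow, blue would score 0.5 under the first variant.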

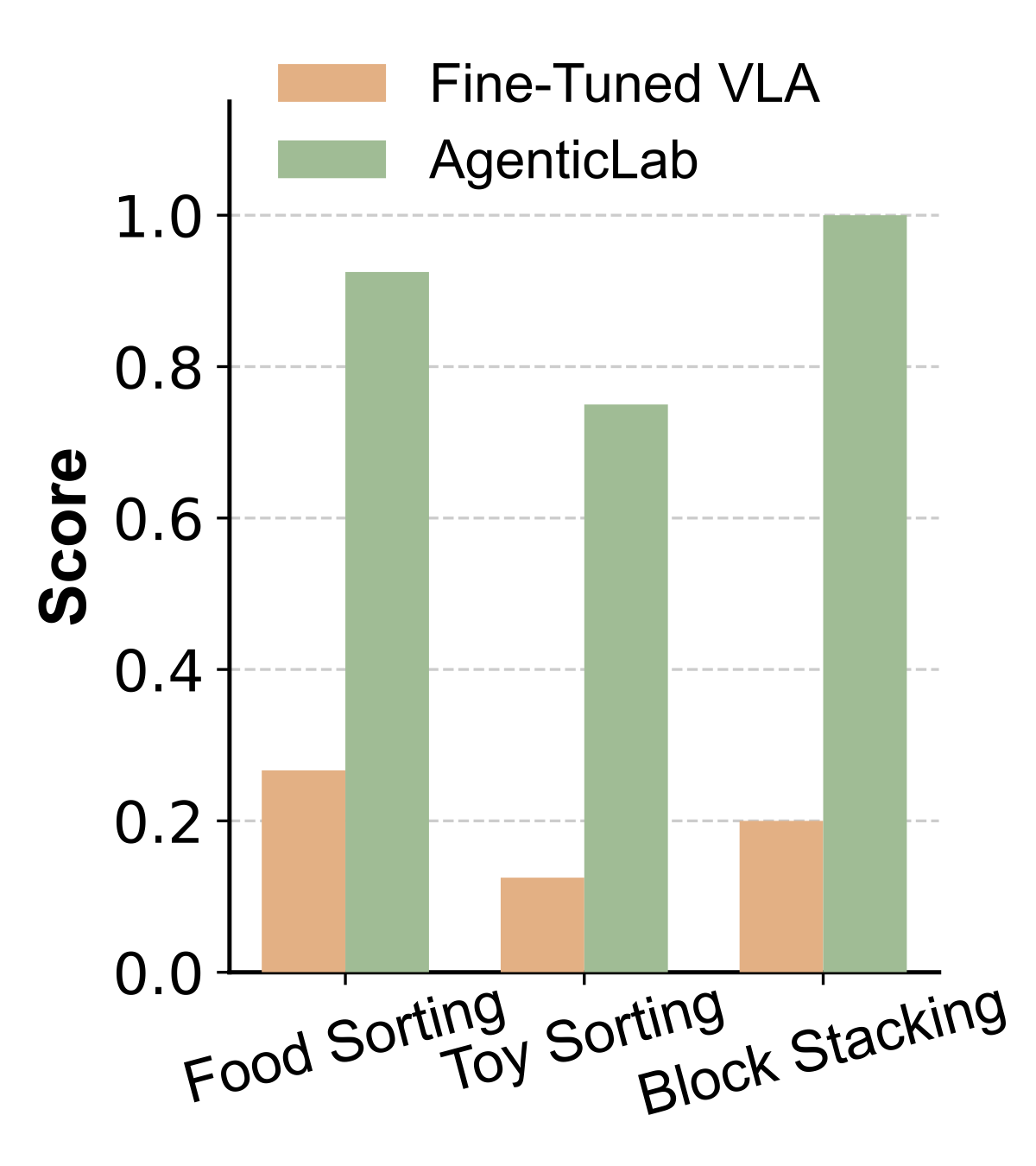

We compare a π0.5 vision-language-action (VLA) model, fine-tuned with 40 demonstrations for sorting and 30 for stacking, against the AgenticLab pipeline on both tasks.

We analyze two critical components that enable reliable closed-loop robot manipulation in AgenticLab.

We compare three variants to evaluate robustness against disturbances: no checker, a goal checker only, and the full action checker.
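To make the three variants concrete, here is a toy sketch of where each checker would intervene. `CheckerMode`, `run_with_checker`, and the `step_done` predicate are hypothetical; in a real system verification would be a VLM judgment over fresh observations, not an exact state lookup.

```python
from enum import Enum
from typing import Callable


class CheckerMode(Enum):
    NONE = "none"      # run the plan open-loop
    GOAL = "goal"      # check only once the whole plan has run
    ACTION = "action"  # check each action's effect right after it executes


def run_with_checker(
    steps: list[str],
    execute: Callable[[str], None],
    step_done: Callable[[str], bool],
    mode: CheckerMode,
    max_rounds: int = 3,
) -> bool:
    """Return whether all steps end up satisfied under the given checker mode."""
    if mode is CheckerMode.NONE:
        for s in steps:
            execute(s)
        # No feedback: the outcome is only inspected afterwards, for scoring.
        return all(step_done(s) for s in steps)
    if mode is CheckerMode.GOAL:
        todo = list(steps)
        for _ in range(max_rounds):
            for s in todo:
                execute(s)
            # Goal check after the full plan; replanning targets unmet steps.
            todo = [s for s in steps if not step_done(s)]
            if not todo:
                return True
        return False
    # ACTION mode: confirm each step before moving on, retrying on failure.
    for s in steps:
        for _ in range(max_rounds):
            execute(s)
            if step_done(s):
                break
        else:
            return False
    return True
```

This toy treats steps as independent. When later steps depend on earlier ones (as in stacking), catching a failure immediately, as the action checker does, avoids building on a wrong intermediate state.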

We evaluate how verifying grasp poses against local point clouds improves both semantic correctness and physical feasibility.
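A grasp check of this kind can be sketched as a few geometric tests on the points near a candidate grasp. The sketch assumes a parallel-jaw gripper with illustrative dimensions (`GripperSpec`), points already transformed into the gripper frame, and a per-point `is_target` mask from segmentation; all names and thresholds are hypothetical, not AgenticLab's actual criteria.

```python
from dataclasses import dataclass

Point = tuple[float, float, float]  # (x, y, z) in the gripper frame, meters


@dataclass
class GripperSpec:
    """Illustrative parallel-jaw gripper geometry (hypothetical values, meters)."""
    max_opening: float = 0.08       # finger gap along the closing axis x
    finger_width: float = 0.02      # finger extent along y
    finger_depth: float = 0.04      # finger length along the approach axis z
    finger_thickness: float = 0.01  # finger extent along x


def check_grasp(
    points: list[Point],
    is_target: list[bool],
    g: GripperSpec,
    min_points: int = 20,
    min_target_frac: float = 0.8,
) -> tuple[bool, str]:
    """Screen a candidate grasp using the local point cloud around it."""
    half_open = g.max_opening / 2
    between = target_hits = collisions = 0
    for (x, y, z), tgt in zip(points, is_target):
        # Ignore points outside the slab swept by the fingers.
        if not (0.0 <= z <= g.finger_depth and abs(y) <= g.finger_width / 2):
            continue
        if abs(x) <= half_open:
            between += 1               # would end up inside the closed grasp
            target_hits += int(tgt)
        elif abs(x) <= half_open + g.finger_thickness:
            collisions += 1            # occupies the volume of an open finger
    if between < min_points:
        return False, "too few points between the fingers"
    if target_hits / between < min_target_frac:
        return False, "grasp region is mostly not the target object"
    if collisions:
        return False, "fingers would collide with the scene"
    return True, "ok"
```

The target-fraction test stands in for semantic correctness (the grasp lands on the named object), while the point-count and collision tests stand in for physical feasibility.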

@article{guo2026agenticlab,
  title={AgenticLab: A Real-World Robot Agent Platform that Can See, Think, and Act},
  author={Guo, Pengyuan and Mai, Zhonghao and Xu, Zhengtong and Zhang, Kaidi and Zhang, Heng and Miao, Zichen and Ajoudani, Arash and Kingston, Zachary and Qiu, Qiang and She, Yu},
  journal={arXiv preprint arXiv:2602.01662},
  year={2026}
}